AI adoption has gained widespread momentum in recent times, which is no surprise given the tangible benefits of using AI in the workplace: it is an effective tool for upskilling, it makes drafting emails convenient, and it speeds up simple, repetitive tasks. However, the uncomfortable truth is that many of us do not pause to ask ourselves important questions such as:

- What trade-offs might come with such reliance on this technology?

- What about the dark side of artificial intelligence and the potential risks that need to be considered before rolling it out on a wider scale?

- Where should organizations start when it comes to creating guardrails for employees to reference when using AI in the workplace?

In my opinion, there are three main dangers of AI that organizations must consider and assess to find their own answer to the question ‘Is AI good or bad?’

The dangers of AI

1. Ethical concerns

Several business ethics scholars have raised the concern that AI can erode people’s moral judgment. Cao and colleagues found that as AI-related knowledge and abilities become an integral part of who an employee is, that employee can become more entitled and, in turn, more likely to engage in unethical behavior. However, when such AI knowledge is widely shared among colleagues, unethical behavior is less likely to occur.

Similarly, Zhao and colleagues found that the use of AI can result in employees engaging in deviant behaviors at work and showing lenient moral judgment toward others who do the same. This phenomenon occurs because when people use AI at work, they are exposed to a different set of moral codes (e.g., what the right and wrong ways of using AI are), which can change the way they perceive what is morally right or wrong. However, the researchers also found that this effect can be mitigated if an individual possesses a strong set of moral values and principles. Thus, as AI is increasingly used at work, it is important that employees and their organizations develop their AI literacy and establish a strong set of ethical standards to mitigate these dangers of AI use.

2. Carbon footprint concerns

If you were to ask an environmentalist ‘Is AI bad?’, chances are they would respond with a resounding yes. While most of our interaction with AI happens on a screen, the actual work taking place behind the scenes is computationally, energetically, and environmentally demanding.

A dark side of AI systems is that they run in large data centers filled with specialized hardware that requires substantial amounts of electricity, along with continuous cooling systems that consume significant amounts of water. In a systematic review of AI’s environmental footprint, Oliveira and colleagues concluded that energy consumption and carbon emissions occur across the entire AI lifecycle, including training, deployment, and hardware production.

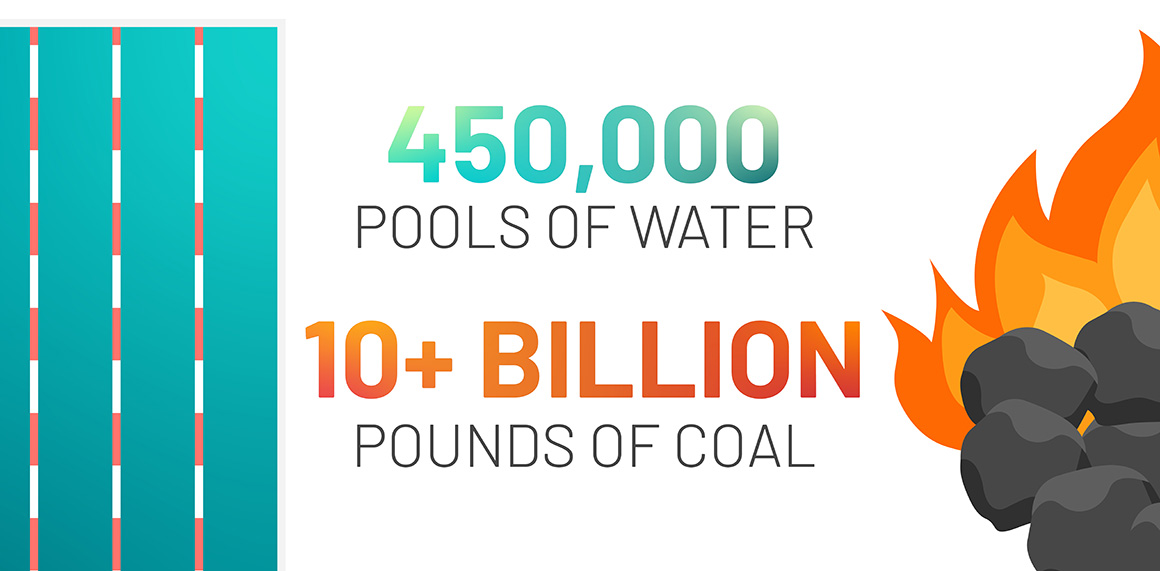

Recent projections further illustrate the scale of this impact, estimating that additional deployment of AI servers across the United States alone could generate an annual water footprint of 731 to 1,125 million cubic meters, along with an additional 24 to 44 million metric tons of CO₂ emissions annually between 2024 and 2030. To put that into perspective, this is roughly equivalent to 450,000 Olympic-sized swimming pools of water on the high end and emissions comparable to burning tens of billions of pounds of coal.
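The Olympic-pool comparison above can be checked with some quick arithmetic. The sketch below assumes that one Olympic-sized pool holds about 2,500 cubic meters (50 m × 25 m × 2 m); that pool volume and the function name `pools_equivalent` are illustrative assumptions, not figures or terms from the cited projections.

```python
# Rough check of the Olympic-pool comparison.
# Assumption: one Olympic-sized pool holds about 2,500 cubic meters
# (50 m x 25 m x 2 m deep). This figure is not taken from the projections.
OLYMPIC_POOL_M3 = 2_500


def pools_equivalent(water_million_m3: float) -> float:
    """Convert a water volume in millions of cubic meters to Olympic pools."""
    return water_million_m3 * 1_000_000 / OLYMPIC_POOL_M3


# Projected annual water footprint range, in millions of cubic meters.
low, high = 731, 1_125

print(f"Low end:  {pools_equivalent(low):,.0f} pools")
print(f"High end: {pools_equivalent(high):,.0f} pools")
```

Under that assumption, the high end of the range works out to 450,000 pools and the low end to roughly 292,000.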

These environmental costs are not limited to the initial training of large AI models. While model training is often highlighted for being energy-intensive, the everyday use of AI systems also contributes meaningfully to total emissions. As these tools become embedded in routine tasks such as drafting emails, summarizing documents, and generating reports, the cumulative energy demand increases accordingly, compounding the danger that AI poses to the health of our planet.

3. Cognitive concerns

Another dark side of AI is that its use can erode critical thinking skills. This concern stems from AI’s ability to swiftly execute complex tasks that previously required more human effort.

Researchers at Cornell University found that people who used AI to help with writing tasks reported lower ownership of their work and even had difficulty recalling what they had written. Over the course of four months, these individuals also demonstrated lower performance on various other outcomes. For instance, the essays written with AI support read as similar to one another, raising the question of whether AI can produce novel and innovative outputs. At the neurological level, those who wrote with AI support also showed reduced mental activity.

Similarly, another study found that the frequency of ‘offloading’ tasks to AI is negatively related to critical thinking ability. Furthermore, Fernandes and colleagues found that when people use AI, regardless of whether they are low or high performers, they tend to overestimate their own performance. This is particularly concerning because overestimating one’s performance is typically observed in low performers but not in their high-performing counterparts.

The evidence suggests that one of the biggest dangers of AI usage is its detrimental effect on our cognitive abilities. This highlights a critical need to find ways to leverage the efficiencies of AI without sacrificing our cognitive ability in the process. Which leads me to my next point…

Researching the cognitive effects of AI use

Given the potential for ethical concerns, cognitive decline, and a negative impact on the environment, is it all doom and gloom when it comes to evaluating the dangers of AI? Not necessarily. Dell’Acqua and colleagues found that the simple act of saving one’s AI-generated content resulted in higher performance. This finding implies that users may be better able to retain the content or knowledge gained from AI tools, thereby preserving their cognitive abilities to some extent. In addition, saving content reduces the number of times that people use AI tools, potentially limiting the environmental toll associated with AI use.

Igor Kabashkin proposed a conceptual model that describes how employees can grow or decline from a cognitive perspective. Kabashkin’s model suggests that when using AI, users should pay attention to how often they delegate to and rely on the AI tool for their work. Excessive delegation and overreliance are likely to result in a cognitive drop, while moderate use supports one’s cognitive growth and sustainability in the long run, as the table below shows. Thus, as we all begin to leverage AI in our work, it is important that we proceed in a cautious and balanced manner.

Description of the cognitive sustainability index factors from Kabashkin (2025)

| Factor | Definition | Cognitive Impact |
|---|---|---|
| Autonomy | One’s ability to formulate tasks and make independent decisions | Positive |
| Reflection | One’s capacity for self-evaluation and critical reassessment | Positive |
| Creativity | One’s ability to maintain originality and synthesize new ideas | Positive |
| Delegation to AI | How frequently one transfers problem-solving to AI systems | Negative |
| Reliance on AI | The degree of uncritical trust in automated output | Negative |

Adapted from Table 3 of Kabashkin (2025)
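To make the direction of each factor concrete, here is a toy sketch of how the five factors in the table could be combined into a single self-check score. This is not Kabashkin’s actual index formula, which is not given here. The factor names and their positive or negative direction come from the table; the 0-to-1 rating scale, the unweighted average, the function name `toy_balance_score`, and the two example users are all assumptions made purely for illustration.

```python
# Illustrative only: NOT Kabashkin's actual index formula.
# Factor names and impact directions come from the table above;
# the 0-to-1 rating scale and the unweighted average are assumptions.
FACTOR_DIRECTION = {
    "autonomy": +1,
    "reflection": +1,
    "creativity": +1,
    "delegation_to_ai": -1,
    "reliance_on_ai": -1,
}


def toy_balance_score(ratings: dict[str, float]) -> float:
    """Average of signed self-ratings; each rating must be in [0, 1]."""
    for name in FACTOR_DIRECTION:
        if not 0.0 <= ratings[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    signed = [FACTOR_DIRECTION[n] * ratings[n] for n in FACTOR_DIRECTION]
    return sum(signed) / len(signed)


# Hypothetical self-ratings for two made-up users.
moderate_user = {"autonomy": 0.8, "reflection": 0.7, "creativity": 0.6,
                 "delegation_to_ai": 0.4, "reliance_on_ai": 0.2}
heavy_user = {"autonomy": 0.3, "reflection": 0.2, "creativity": 0.3,
              "delegation_to_ai": 0.9, "reliance_on_ai": 0.9}

# The moderate user should score higher than the heavy delegator.
print(toy_balance_score(moderate_user) > toy_balance_score(heavy_user))
```

The point of the sketch is only the sign of each factor: higher autonomy, reflection, and creativity push the score up, while heavier delegation and uncritical reliance pull it down.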

The critical role of AI governance

This brings us back to the original question: Is AI good or bad? As we are still in the early phases of AI implementation, there is plenty of room for experimentation to get to a point where we all can leverage it effectively. But first, it is of utmost importance that organizations establish a code of ethics to support the development of an effective AI governance system.

The American Psychological Association and the Society for Industrial and Organizational Psychology have established a strong starting point that organizations may adopt. For instance, their ethical frameworks consider important factors such as transparency, fairness, safety, and privacy. Building on these principles allows organizations to create AI systems that not only comply with regulatory expectations but also earn the trust of employees, customers, and stakeholders.

In addition, Nathaniel Fast, a professor at the University of Southern California Marshall School of Business, suggested that organizations using AI need to monitor and address the following questions to ensure it is used ethically:

- What is the purpose of this AI tool?

- What are you trying to achieve by using this AI tool?

- Is the AI tool helping you achieve that purpose and how can you measure it?

- What are the side effects and harms that could result from using this AI tool?

Subsequently, organizations can develop learning modules to increase employees’ AI literacy. This includes educating them on how the AI tool works, what it can (or cannot) be used for, and how to use it most effectively.

Counteracting the dark side of AI: Is AI bad or good?

When asked if AI is good or bad, the answer truly is ‘it depends.’ It’s a powerful tool whose impact is largely defined by how we choose to design, deploy, and regulate it. The emerging evidence suggests that the dark side of AI can subtly influence ethical behavior, shape how we think, and carry environmental costs that are often invisible to end users.

These trade-offs do not mean we should reject AI altogether. Instead, they call for greater intentionality. Organizations must move beyond asking whether AI can improve efficiency and begin asking whether it should be used in particular contexts, at what scale, and with what safeguards in place. Developing clear governance structures, strengthening AI literacy, encouraging reflective and moderate use, and incorporating environmental accountability into adoption decisions are all critical steps forward. As AI continues to evolve, the real challenge is not simply keeping up with the technology but ensuring that human judgment, ethical standards, and sustainability remain at the center of its use.
